Learning in the Age of Algorithmic Video

Are children learning anything from TikTok and YouTube?

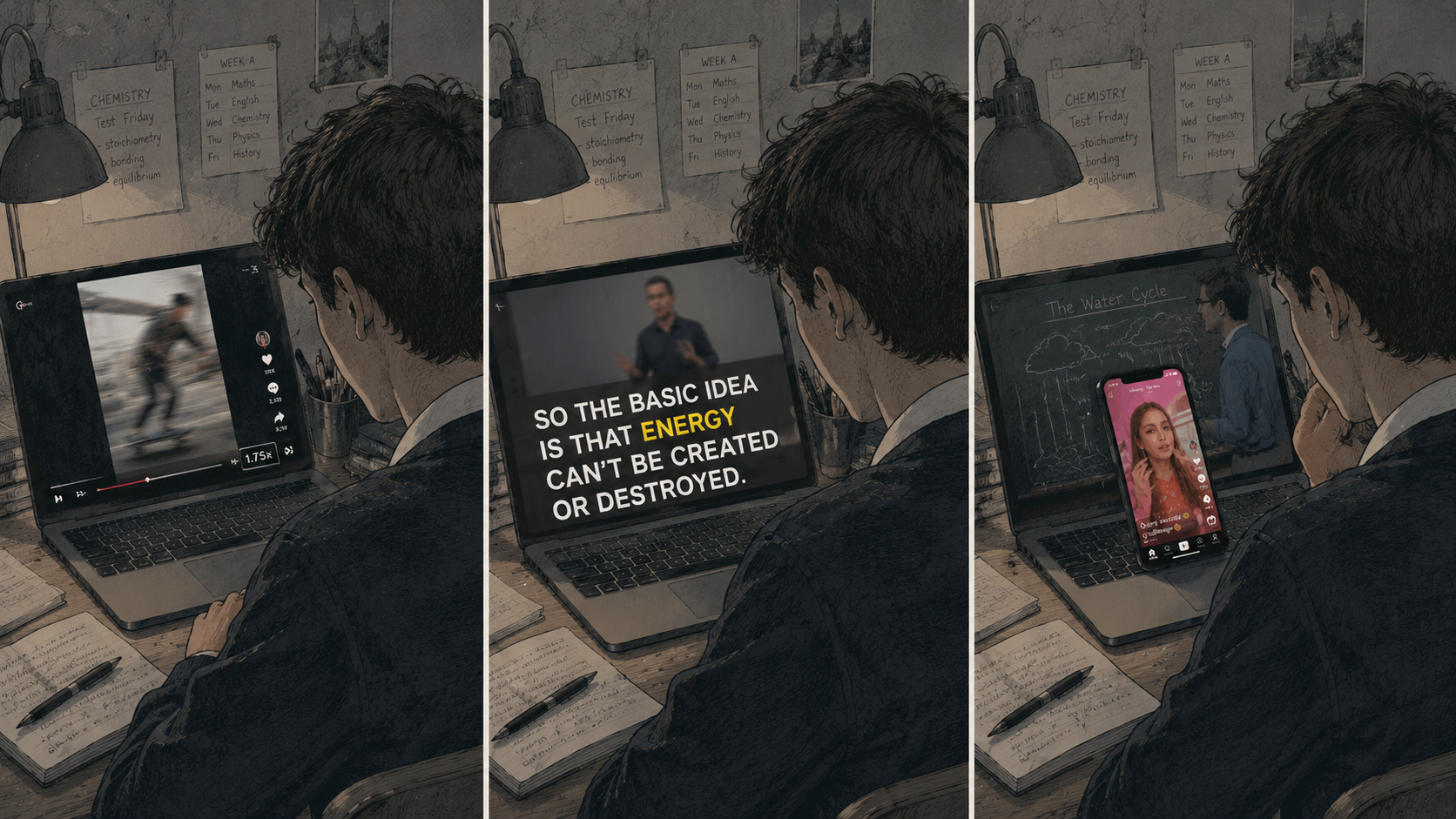

Watch a teenager watch a video in 2026. Chances are it is playing at 1.75x speed, with subtitles on by default, on a phone propped against a textbook, while ChatGPT sits in another tab waiting to summarise the bits they didn't catch. The video she is watching is, increasingly, not made by anyone in particular: it is delivered by an AI generated avatar and trained to look engaging within the first 1.2 seconds. This is now the dominant form of media that children are consuming.

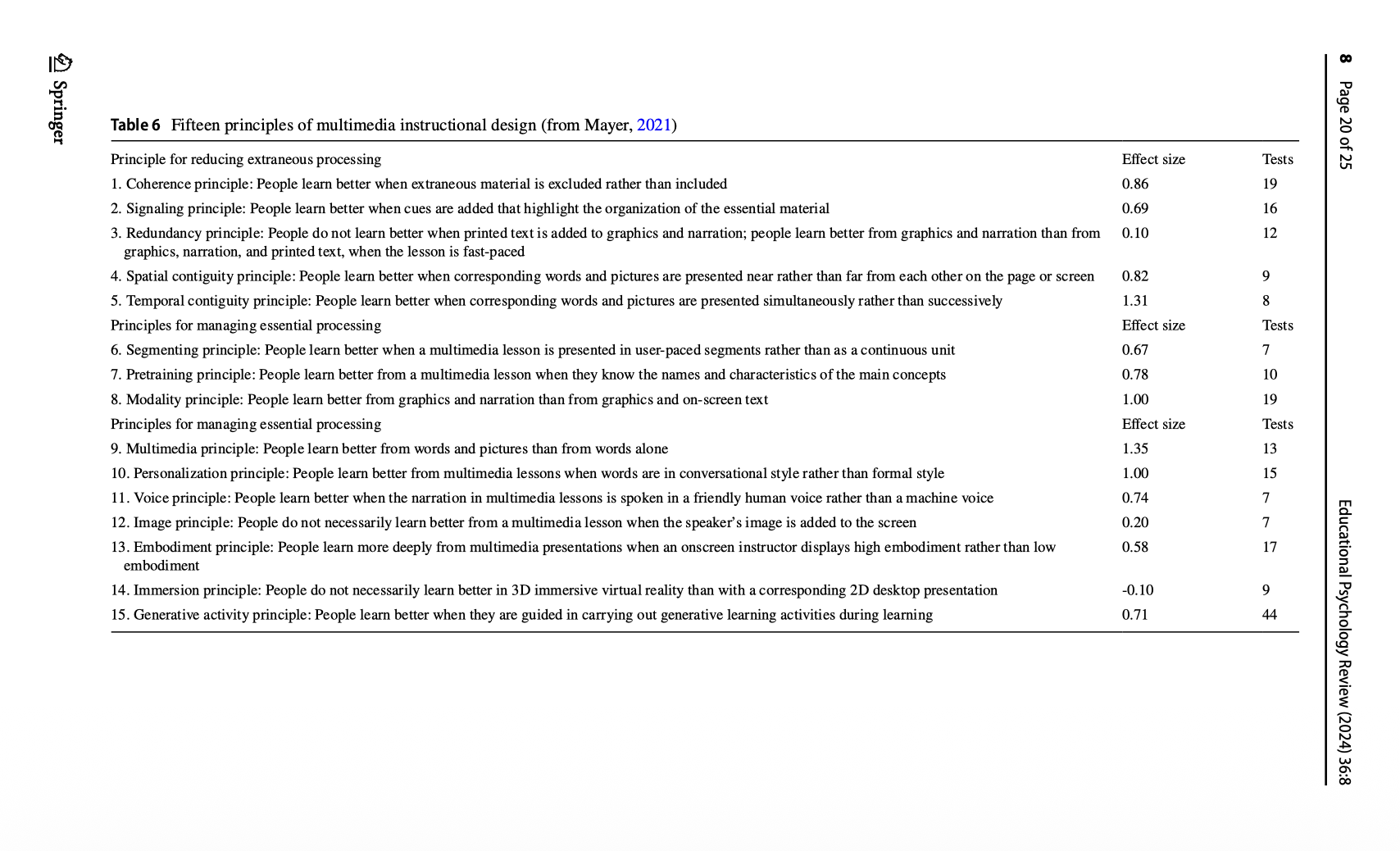

And yet the research we have to make sense of this was conducted on a learner who has since been replaced. Rich Mayer's seminal work on multimedia theory is some of the most carefully replicated research in educational psychology. What has changed however, are the conditions in which those primitives now operate. The principles still hold; but the boundary conditions under which they were established are no longer the conditions students are in.

That leaves educators, parents and designers in an unusual position: relying on a research base whose validity has visibly decayed, and where the boundary conditions of learning have dramatically shifted on two axes; student behaviour + video format. Then there is the wildcard of AI, which is less a third axis than an accelerant on the first two: changing how students engage with video, and changing what video itself is. How should we design learning with video in mind and what should parents need to know?

Things have changed much more than we think

Last week, the Wall Street Journal published an investigation into YouTube in American classrooms which is shocking on many levels. One mother in Wichita noticed her seventh-grader had developed a surprisingly detailed knowledge of a video game she'd banned at home. When she checked his school Google account, she discovered he had watched more than 13,000 YouTube videos on his school Chromebook in three months, many of them violent, some sexually suggestive.

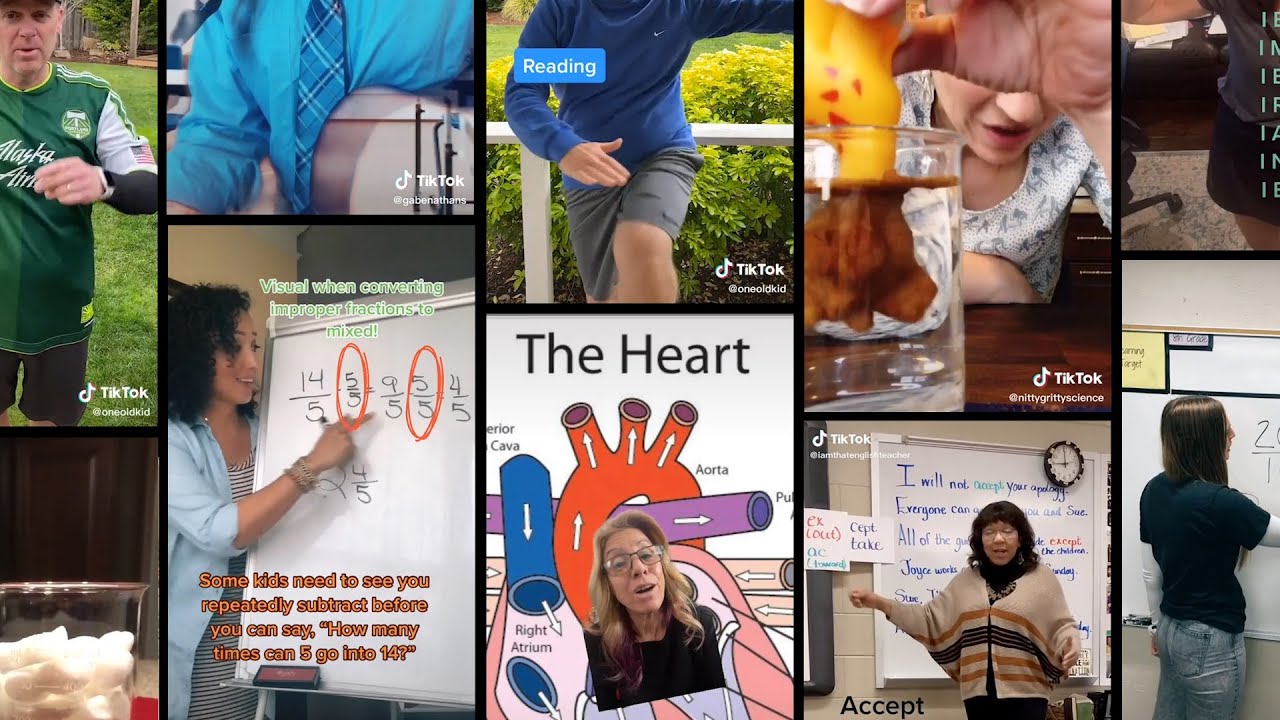

This is not an edge case. According to the Journal's reporting, YouTube is sometimes half of all student web traffic on school devices. A survey cited by YouTube executives found that 94% of teachers have used the platform in their roles. More than 88% of US public schools have issued 1:1 devices; Chromebooks (primed for YouTube by the way) hold around 60% of the K-12 mobile device market.

What none of these figures captures is what is actually happening cognitively while the watching is going on. To answer that, two shifts have to be in view: 1.) how students now watch, and 2.) how the medium itself has changed. I've been researching this for months now, trying to figure out how to apply the science of learning to a media ecology that did not exist when most of that science was done. What follows is a long post, an attempt to set out what we still know, what we don't, and what educators, parents and designers should do in the meantime.

What we now know about how students watch video

On the student side, video learning is now embedded in a thicket of habits that simply were not part of the laboratory paradigm from 30 years ago and which will seem alien to anyone over a certain age. Speed-watching at 1.5x or 2x is the default rather than the exception. Captions are on by default, even for native speakers, often with one word highlighted in yellow. The phone is usually the second screen, and often the first. Cognitive offloading to AI assistants is a routine reflex, not an event. Students arrive at an instructional video with priors built from TikTok, YouTube, and the algorithmic feed, which means their tolerance threshold for what counts as cognitive load, or, conversely, as boredom.

On the medium side, the format itself has drifted from the narrated PowerPoint that multimedia studies treated as the canonical multimedia stimulus in 2001. The dominant grammar of educational video in 2026 is short-form, jump-cut, performer-on-camera, dense with kinetic typography, and increasingly AI-generated where convenient. Many videos are now delivered by avatars who do not exist. The decisions about face, pace, length and ornament are being made by content creators raised on a radically different set of YouTube production conventions, rather than instructional designers raised on cognitive load theory.

Need for speed

Begin with speed. Speed-watching is the most empirically settled of the new behaviours, and the picture is more interesting than the simple "comprehension is preserved at 2x" headline would suggest. Murphy and colleagues' 2022 work at UCLA established the boundary: comprehension is preserved at 1.5x and 2x, with measurable degradation appearing at 2.5x and a steep drop beyond that. Their survey of UCLA undergraduates put the proportion of speed-watchers at 85% several years ago, and that figure has now ossified into a generational default.

Three findings from the last eighteen months complicate the simple story. A 2026 Frontiers paper shows that perceived clarity drops before objective comprehension does: students believe they are losing information at 1.5x even when they are not, and respond by speeding up further to compensate, which is precisely when comprehension begins to fail. Ahn and colleagues, working in Applied Cognitive Psychology in 2025, find that face-on-screen confers no benefit at higher playback speeds and may compete for attention when speech is already compressed. This is one of the few studies that addresses the boundary-condition question directly, and it is not encouraging: the instructor-presence effect that Mayer's tradition documented may simply not survive the playback conditions in which video is now consumed.

A third paper, from Computers in Human Behavior in early 2025, finds that even where comprehension is preserved, metacognitive monitoring degrades. Students do not know what they are missing. The implication is that speed-watching is fine for material the student already partly knows, and particularly poor for material at the edge of their schema, which is the material instructional video is most often used for.

Captions are now the default

Then there is the caption layer. To my knowledge no published study has tested caption-on viewing during instructional video specifically, but the underlying behavioural data is unambiguous. Stagetext's UK survey reports that 80% of 18-to-24-year-olds use subtitles "some or all of the time." Preply puts Gen Z subtitle usage at 70%, against 38% for boomers. Netflix has 80% of its members regularly using subtitles or captions. The reasons go well beyond accessibility: muddled audio mixing in modern productions, accent comprehension, viewing in noisy environments, and the dual-channel reading-while-watching habit conditioned by TikTok, where captions are baked into the medium rather than offered as an option.

This intersects awkwardly with the redundancy principle, which warns against on-screen text duplicating speech under conditions of split attention. Internal reporting from the University of Bristol's Digital Education Office on the accuracy of automatically generated captions for online learning materials suggests something subtler than the principle would predict: students may now process captions as part of the audio channel rather than a competing visual one. The boundary condition for the redundancy effect appears to have shifted under generational habituation.

Worth noting that the redundancy principle was never one of Mayer's strong findings. In his 2024 retrospective, it sits at d = 0.10 across 12 tests, among the weakest in the catalogue. The principle is being asked to do work in this argument that it never did particularly strongly even in the laboratory conditions in which it was first established.

The second screen

The third behavioural drift is the second screen. Industry data has 95% of consumers holding their smartphones while watching television, and 81% of students multitasking while doing homework. The educational research base is more cautious but consistent: multitasking during instructional content depresses transfer (the ability to apply what was watched to new situations) more than it depresses factual recall. Students can still tell you what was said. They cannot do anything with it. The Information Systems Research paper "From Smartphones to Smart Students" finds the now-canonical pattern, namely that any concurrent device use during instruction reduces comprehension, with a larger effect on higher-order outcomes. A more uncomfortable finding from the qualitative literature on Gen Z multitasking is that students do not experience the second screen as multitasking at all. They experience it as the floor of cognitive engagement, with single-screen attention feeling under-stimulated.

The rich history of educational video

Twenty years ago I watched Kenneth Clark stand in front of Chartres Cathedral for thirteen hours. Civilisation: A Personal View had been broadcast a quarter-century before I encountered it on a BBC repeat, but the series completely fascinated me, and changed how I thought about Western art, history and the long sweep of European intellectual life in ways I am still drawing on.

A few years later, reading American literature for my undergrad degree, the thing that catalysed my understanding of how modern America was formed, more than almost any seminar, was Ken Burns's The West. Eight hours across nine episodes, voiceover narration over still photographs, primary sources read aloud, Peter Coyote as the unobtrusive through-line. I came back to it repeatedly during the degree and after, and it did more for my sense of the geographical, racial, and cultural substrate of the literature than almost any classroom encounter. Later I watched The Civil War and was utterly entranced by Shelby Foote and his slow Mississippi drawl, which made the war feel less researched than personally remembered.

These videos brought the past to life for me in ways inseparable from how they were made: long, slow, deliberate, by people whose authority over the material had been hard-won and earned across decades.

Clark was a cultural tourist guide. A mind moving through history and reporting back what it found to 1970s British living rooms. But a new incarnation of that cultural tourist guide has emerged, one which makes the additional claim of being educational and so is worth examining here. Chloe vs. History is perhaps the most successful example: a warm, personable young woman who travels through history reacting to what she finds, with over 151,000 subscribers across TikTok and Instagram. But unlike Clark, she is entirely AI-generated and I suspect this is one reason it's hard to learn anything from her.

Learning has a lot to do with the brain trying to reconcile multiple elements, often competing ones. (This for me is why cognitive load theory is one of the most important frameworks for instructional design). So my hunch is that if the brain is trying to process a human face which it consciously knows is fake but which it instinctively feels is real, this creates a kind of perceptual incongruence that might not be good for learning.

I see this as a kind of uncanny valley, but one with real pedagogical consequences in the sense that every bit of working memory the brain spends reconciling that incongruity is working memory not available for the actual content. If the avatar's face is doing background work to be parsed, the schema-building work the video is meant to support has fewer resources to draw on.

However, we do have examples of AI generated/assisted content which is able to bring the past to life in ways that are truly incredible and genuinely educative. The cleanest example is Peter Jackson's They Shall Not Grow Old (2018). Jackson used machine learning to interpolate frame rates, colourise, and stabilise a hundred-year archive of First World War footage from the Imperial War Museum. He hired forensic lip-readers to verify what the soldiers were actually saying so the audio reconstructions matched the historical record. Foley artists added period-correct sound. The AI was doing the technical restoration; the historical content was the soldiers themselves.

The film succeeded I think, for the same reason Burns's The Civil War succeeded: a careful intelligence at the centre of it, organising real material with patience. It was screened in cinemas, used in schools, and treated seriously by WWI historians. It made a generation that had grown up seeing WWI as a sepia abstraction encounter the actual soldiers, the same age as them, looking back at them.

The AI layer: a mixed picture

Cognitive offloading to AI assistants is the most active growth area in education research, and the 2024 to 2026 record is mixed in a way that is actually clarifying. On the negative side, the PNAS 2025 paper on generative AI without guardrails harming learning in high-school maths is the strongest single piece of evidence that ungated AI use during practice degrades subsequent independent performance. A 2025 RCT in Social Sciences & Humanities Open finds matched effects in adult learners. The recurring pattern across that literature is that the same tool acts as enhancer or crutch depending on how it is used.

Among the positive findings, a 2025 quasi-experimental study in Forum for Linguistic Studies reports that students who delegated lower-order tasks (mechanics, formatting) to AI showed greater improvements in standardised critical-thinking assessments. A Scientific Reports paper on preservice teachers finds the same pattern: strategic offloading correlates with academic gain, passive offloading does not. The single empirical finding most worth carrying into the design conversation comes from a Frontiers paper on differential effects of GPT-based tools: the form of the interaction matters more than the presence of AI itself, and the effect is moderated by prior ability. Low-performing students benefit most from Socratic-style chatbots that engage them in dialogue; high-performing students are harmed most by summary tools that do the cognitive work for them.

The newer and more under-researched behaviour is the substitution of AI summaries for engagement with source material. Survey data puts university student AI use at 86–92% depending on geography and definition, with summarising readings the second most common use case after general help with assignments. For instructional video specifically, this matters because the behaviour to design around is no longer "students watch the video," but rather "students watch the video, ask the AI to summarise it, and revise from the summary."

What the medium has become

The format-side drift is less well evidenced than the behavioural drift, with two exceptions: short-form video, and AI-generated instruction.

The short-form picture has matured fast. The August 2025 medRxiv systematic review The Impact of Short-Form Video Use on Cognitive and Mental Health Outcomes, the 2025 narrative review on short-form video and sustained attention, and the 2026 systematic review on short-video addiction in adolescents converge on the same finding. Heavy short-form video use is associated with measurable decrements in sustained attention, working-memory efficiency and inhibitory control, with effect sizes large enough to persist after controlling for prior cognitive ability and family background.

However, algorithmic delivery is the format shift the educational research literature has barely engaged with. The communication-and-media literature is more useful here. A 2025 piece on algorithmic recommendations in young people's everyday lives finds that adolescents experience the algorithm as an environment rather than as a tool, and report a reduced sense of choice over content selection.

The implication for design is that sequencing assumptions baked into traditional curriculum (we will show this clip after that one) have no purchase when the medium is feed-driven. A video can succeed brilliantly as a TikTok and fail entirely as the third unit of a sequenced course, because the medium expects each piece to stand alone.

Then there is AI-generated instructional video, where the empirical base has grown rapidly. Xu and colleagues in the 2025 British Journal of Educational Technology find equivalent cognitive learning outcomes between AI-generated and human-instructor video, with a small advantage for AI on retention and no significant difference on transfer. A 2025 Frontiers rapid review notes that 72% of students in one experimental group did not recognise that their instructor was artificially generated.

The implication is significant I think. The instructor-presence literature was built on the premise that the instructor is a human being whose social, emotional and pedagogical signals carry information. AI-generated instructors decouple presence from instructorhood: the face is real to the viewer but not real in any other sense. None of the canonical instructor-presence findings have been re-tested under this condition.

What to do in the meantime

Taken as a whole, I think the unit of analysis is no longer the video but rather the learning episode. Speed, captions, second screens and AI summarisers all modify the encoding conditions in ways that no scaling of CTML's principles can fully anticipate. Design decisions made under the assumption of attentive single-screen viewing are designing for a learner who is statistically rare. Treating the video as a self-contained intervention, when in practice it is being consumed inside a noisy multi-screen, multi-tool environment, is the central category error in current educational video design.

The design lever with the most empirical support for protecting learning under these conditions is embedded retrieval and prequestioning. The prequestion literature is now better characterised than at any previous point. Carpenter and Fraundorf's 2025 multilevel meta-analysis establishes that prequestions reliably enhance learning of targeted content (g = .66) but do not generalise: they do not increase learning of incidental material in the same resource (g = .01). Feedback amplifies the effect substantially.

The strongest empirical base is not rhetorical. In Mayer's own 2024 catalogue of fifteen design principles, the generative activity principle sits at d = 0.71 across 44 experimental tests, the largest evidence base for any single principle in the entire CTML edifice.

Three questions before "I learned so much from this video"

- What kind of knowledge is being claimed? Is it factual recall, procedural-motor, conceptual understanding, or transferable expertise? The format has different empirical credibility at each of these levels. It is most defensible at procedural-motor, plausible at factual recall with repetition, weak at conceptual understanding, and entirely speculative at transferable expertise.

- What is the cognitive mechanism that produced the claimed knowledge? Was there retrieval practice? Was there elaboration? Was there generation? Did the video pause for self-explanation? Was the content revisited? Was the learner tested? If the answer to all of these is no, the only mechanism on offer is passive encoding from a fluent presentation.

- What would falsify the claim? Asked to write a detailed explanation of the topic without rewatching the video, can the learner produce one? Asked a transfer question that requires applying the principle to a new case, can the learner answer? If not, the claim of having learned is the fluency illusion.

What the format is genuinely good for

1. Procedural-motor content

Höffler and Leutner's 2007 meta-analysis found that animation outperforms static pictures with d = 1.06 specifically for procedural-motor content where the learning objective involves spatial transformation over time. Many YouTube tutorials are exactly this: how to fix a tap, how to truss a chicken, how to perform a free-throw, how to wire a plug.

2. Vocabulary and single-fact acquisition with repetition

Microlearning at the TikTok scale can deliver discrete facts repeatedly enough to embed them, particularly in language-learning contexts where the learner returns to the same vocabulary repeatedly.

3. Curiosity ignition

The format is unusually effective at producing the moment of "I want to know more about this," which is a precondition for sustained engagement with a topic. A child who watches a Mark Rober video about marble runs is arguably more likely to investigate physics than a child who has not.

4. Exposure to topics that would not otherwise be encountered

The discovery surface of YouTube and TikTok is wide. People learn that quantum entanglement, octopus cognition, the structure of the bond market, or the history of the Antikythera mechanism exist as topics worth thinking about. This is genuine information enrichment, even if the depth is shallow.

5. Affective relationship with subject matter

A child who develops parasocial warmth towards Hank Green is more likely to identify with science as a domain that is interesting, accessible, and culturally legitimate. The empirical case for this as a learning effect per se is weak, but its role as a precondition for later learning is plausible.

Closing

Educational video, in the form most students now consume it, has run ahead of the science designed to evaluate it. The field is in roughly the position medicine would be in if the only randomised trials had been conducted on a population that no longer existed. The principles are good. The patient has changed. Designers, parents and educators have to make decisions every day about whether to accept the medium as it now is, or whether to push back against the conditions that have made it less educative than it could be. I do not think those decisions can wait for the literature to catch up. They are being made by default, by those with the budgets to commission video and the algorithms to distribute it, and the burden of pushing back falls on those of us who still think learning is a more demanding cognitive activity than the current medium is willing to acknowledge.